Build a Better Digital Experience

Balance flexibility and security with Acquia's open, composable digital experience platform (DXP).

Govern content and assets

Engage and convert customers

Achieve operational excellence

10 Reasons to Choose Acquia

Want to produce world-class digital experiences? Here are 10 reasons to go with Acquia.

What's New, in a Nutshell

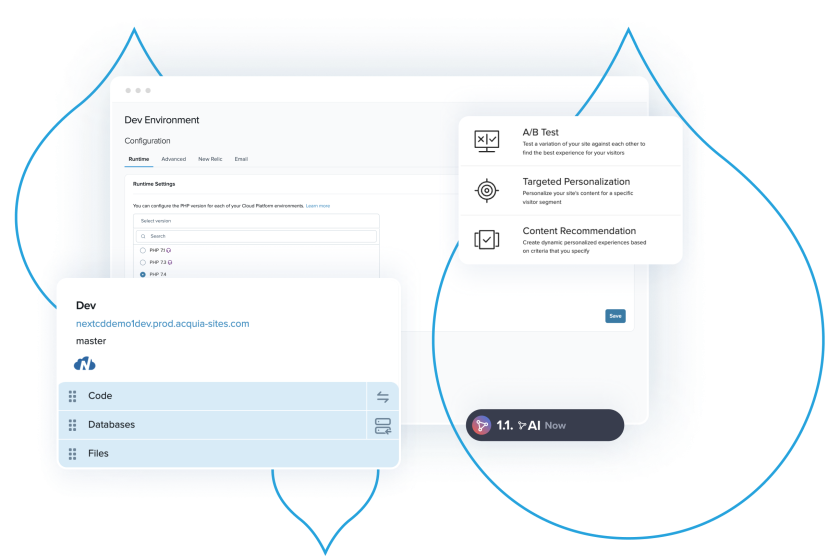

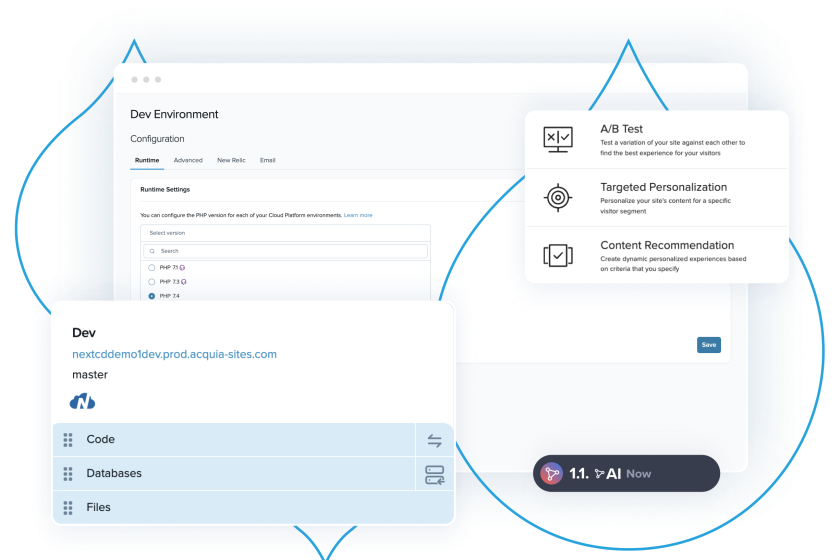

The Acquia Digital Experience Platform

Our software builds on itself to form the world’s only open digital experience platform.

Drupal Hosting

A Drupal-optimized hosting platform that meets your technical needs and provides powerful, user-friendly tools for your team to excel.

Brand Management

Manage diverse assets across teams, tools, and channels from a single source to power consistent customer experiences.

Customer Data Management

Your central hub for the customer data you need to give customers the experiences they want.

Content Management

Deliver content that creates head-turning customer experiences.

The Results Are In

Leading global market researchers took a look at our digital experience platform solutions, and here's what they found.

The Forrester Wave™: Digital Asset Management Systems, Q1 2024

Acquia brings a flexible and feature-rich DAM.

2024 Gartner® Magic Quadrant™ for DXP

Acquia Named a Leader for DXP for the Fifth Year Running!

Payback in Less Than Six Months; 589% ROI Over Three Years.

This Total Economic Impact™ (TEI) study by Forrester examines the ROI companies have seen with Acquia CDP.

Acquia Named a Leader in Digital Experience by G2